I Built a Flow That Fixes Failed Power Automate Flows

When a monitored flow fails, it sends an HTTP request that triggers a handler flow. A Copilot Studio agent reads the failed run's history, produces a plain-English diagnosis, and sends it for human approval. If the reviewer asks for a fix, the agent proposes one. If the reviewer approves it, the agent applies the fix, redeploys the flow, and resubmits the failed run to verify the result. All inside Power Automate.

This pattern only became practical once agents could inspect action-level run history programmatically instead of relying on run-level status alone.

This post walks through the approval-gated pattern behind it. The point is not full delegation. The point is controlled delegation.

Disclosure: We built Flow Studio MCP, the MCP server the agent uses to read action-level Power Automate data. The architecture pattern generalizes to any MCP server, but the specific tools referenced here are ours.

Why this exists

"Full delegation to AI agents still feels too early." That is the most common pushback I hear when I talk about using AI agents to operate Power Automate. And it is a fair concern. If the agent builds something wrong, or diagnoses an error incorrectly, or deploys a broken fix, you have a worse problem than you started with.

The answer is not to avoid delegation. The answer is to put approval gates at the stages where the agent's output matters. The key enabling piece behind this pattern is Flow Studio MCP. It gives agents access to action-level run history, which turns debugging from a manual portal activity into something a flow can orchestrate.

The pattern I landed on separates the agent's work into three phases (diagnose, propose fix, execute fix) and puts a human approval gate between consecutive phases. The agent does the heavy lifting. The human validates at two checkpoints. The agent only touches production after explicit approval.

The architecture

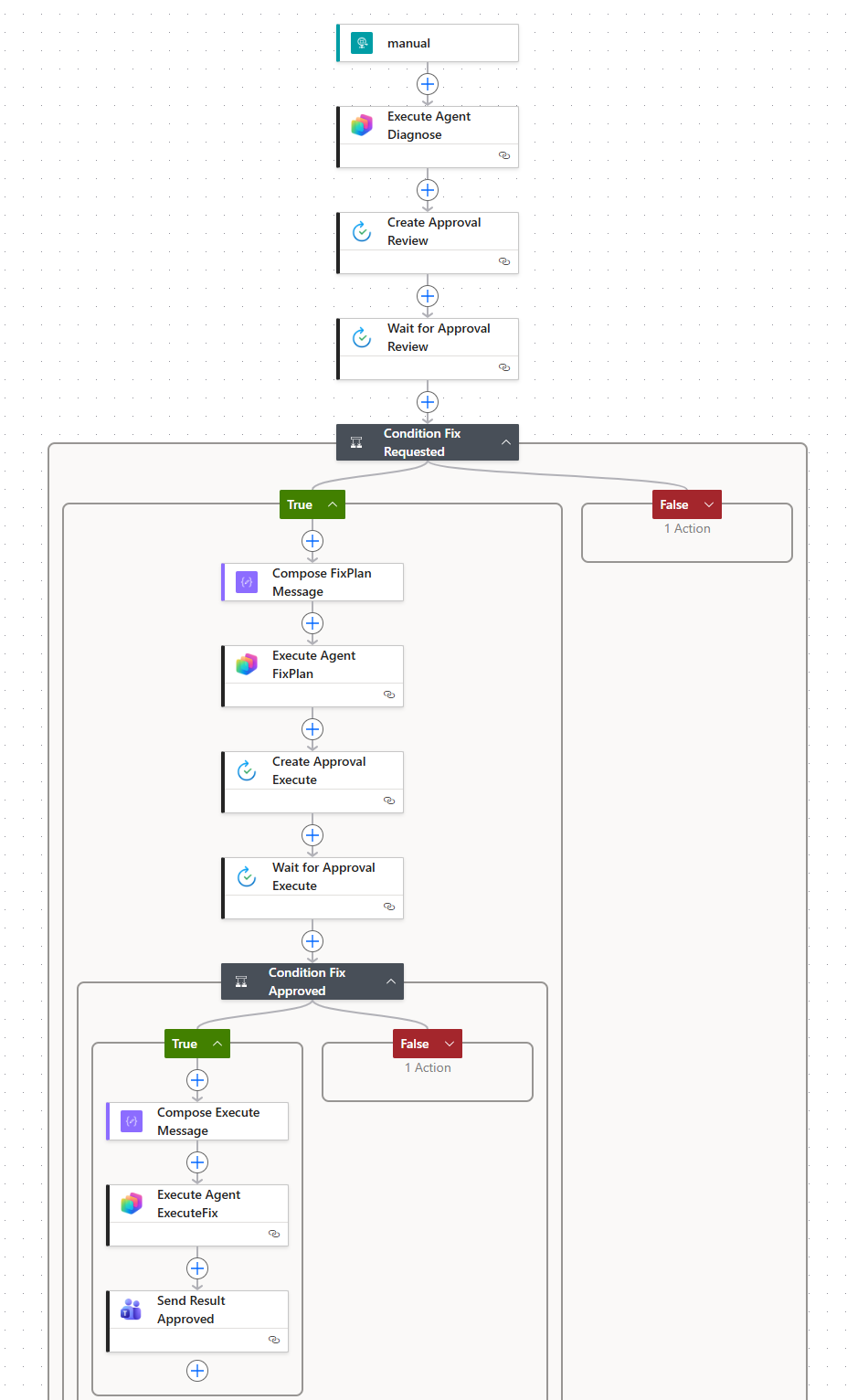

The complete flow in the Power Automate designer. HTTP trigger at the top, two condition branches (fix requested / fix approved), three Copilot Studio agent calls, two approval gates, and Teams notifications on every path.

The goal is to let the agent do the heavy work while stopping at every step where a wrong answer could cause damage.

How the handler gets triggered

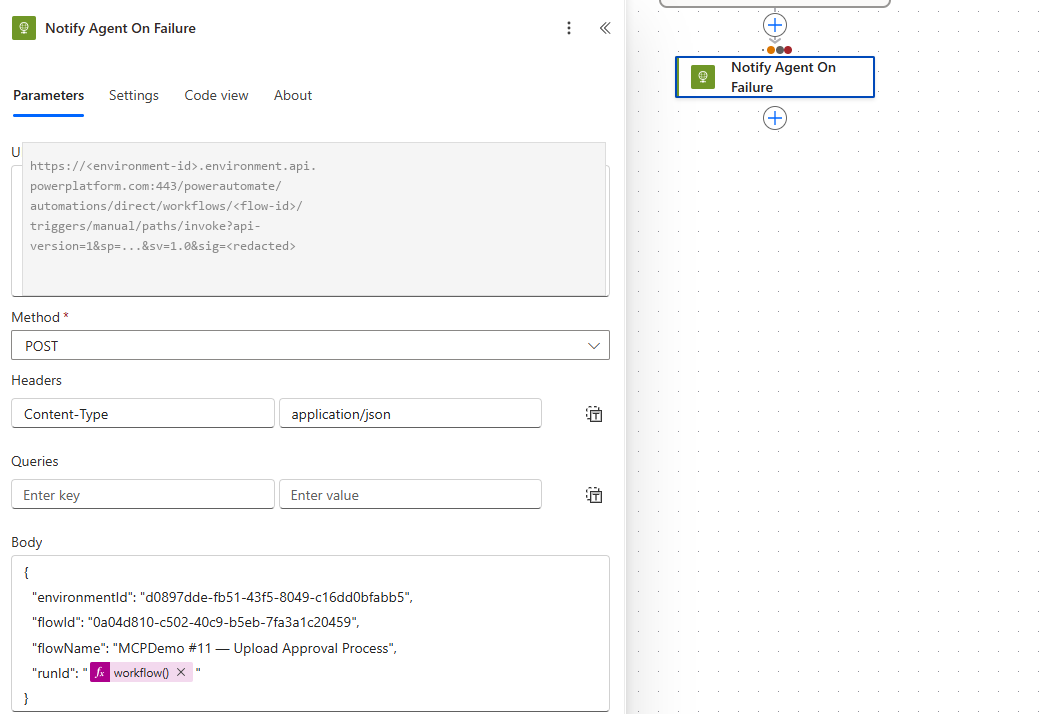

Power Automate does not have a built-in "when another flow fails, call this webhook" trigger. To wire it, add a Notify Agent On Failure HTTP action to the monitored flows using configure-run-after on failure. The action POSTs the flowId, flowName, runId, and environmentId to the handler's HTTP trigger URL. As usual lately, the AI agent wrote the pattern and inserted it into every flow I pointed it at.

The "Notify Agent On Failure" action added to the monitored flow's error path (red dots in the right panel indicate configure-run-after on failure). It POSTs to the handler's HTTP trigger URL with a JSON body containing the four required fields. The workflow() expression captures the current run ID dynamically. One action per flow you want to monitor.
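For reference, here is a minimal Python sketch of the contract between a monitored flow and the handler: the body the Notify Agent On Failure action sends, and a check the handler side could run before calling the agent. The workflow() expressions are an assumption on my part (workflow()['name'] for the flow ID, workflow()['run']['name'] for the run ID, and the tags for environment and display name), so verify them in your own tenant. The helper validate_failure_payload is hypothetical.

```python
import json

# Body of the "Notify Agent On Failure" HTTP action, written as a Python dict.
# The values are workflow() expressions (an assumption, not copied from the post)
# that Power Automate evaluates at run time inside the monitored flow.
NOTIFY_BODY_TEMPLATE = {
    "flowId": "@{workflow()?['name']}",
    "flowName": "@{workflow()?['tags']?['flowDisplayName']}",
    "runId": "@{workflow()?['run']?['name']}",
    "environmentId": "@{workflow()?['tags']?['environmentName']}",
}

# The four fields the handler's HTTP trigger expects.
REQUIRED_FIELDS = ("flowId", "flowName", "runId", "environmentId")


def validate_failure_payload(raw_body: str) -> dict:
    """Parse a request body and confirm all four fields are non-empty strings.

    Hypothetical helper: raises ValueError instead of letting the handler
    call the agent with a half-populated request.
    """
    try:
        payload = json.loads(raw_body)
    except json.JSONDecodeError as exc:
        raise ValueError(f"body is not valid JSON: {exc}") from exc
    if not isinstance(payload, dict):
        raise ValueError("body must be a JSON object")
    missing = [
        name for name in REQUIRED_FIELDS
        if not isinstance(payload.get(name), str) or not payload[name].strip()
    ]
    if missing:
        raise ValueError(f"missing required fields: {', '.join(missing)}")
    return {name: payload[name] for name in REQUIRED_FIELDS}


if __name__ == "__main__":
    # Placeholder values standing in for the evaluated expressions.
    sample = json.dumps({
        "flowId": "placeholder-flow-id",
        "flowName": "Placeholder Flow",
        "runId": "placeholder-run-id",
        "environmentId": "placeholder-environment-id",
    })
    print(validate_failure_payload(sample))
```

Rejecting an incomplete payload early means a misconfigured Notify action shows up as a failed handler run, not as a confusing agent diagnosis of the wrong run.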

The pipeline has four stages. The orchestration is handled inside Power Automate using the Copilot Studio connector to call the agent at each step.

- DIAGNOSE (automated): The flow triggers on HTTP (receives flowId, flowName, runId, environmentId). It calls a Copilot Studio agent via the action Execute Agent and wait (ExecuteCopilotAsyncV2). The agent uses two MCP tools: get_live_flow_run_error (per-action failure breakdown) and get_live_flow_run_action_outputs (inputs and outputs at any action, including loop iterations). Returns a plain-English diagnosis.

- REVIEW (human approval gate): A Custom Response approval is sent to the flow maker. The approval body contains the agent's diagnosis. Options: "Request fix proposal" or "Dismiss".

- PROPOSE FIX (automated): If the reviewer requests a fix, the agent is called again with the diagnosis plus any comments the reviewer added. The agent proposes a fix plan. A second approval: "Approve - execute fix" or "Reject - do nothing".

- EXECUTE (automated, only after human approval): The agent calls update_live_flow to apply the fix, resubmits the failed run to verify, and sends the result to Teams.
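The control flow of the four stages above fits in a few lines. This is a Python model of the orchestration, not the flow itself: agent, approve, and teams are hypothetical stand-ins for the Execute Agent and wait action, the Custom Response approval, and the Teams post, and the prompts are abbreviated placeholders. What it shows is that the only path to the write step passes through two explicit approvals.

```python
from typing import Callable, List, Tuple

# Hypothetical stand-ins for the flow's three connectors.
AgentCall = Callable[[str], str]                            # Execute Agent and wait: prompt -> response text
ApprovalCall = Callable[[str, List[str]], Tuple[str, str]]  # approval: (details, options) -> (outcome, comment)
TeamsPost = Callable[[str], None]                           # Teams notification


def handle_failure(run: dict, agent: AgentCall, approve: ApprovalCall, teams: TeamsPost) -> str:
    """Walk one failed run through DIAGNOSE -> REVIEW -> PROPOSE FIX -> EXECUTE.

    Returns the name of the stage where handling stopped. Prompts are
    abbreviated placeholders, not the real flow's prompts.
    """
    # 1. DIAGNOSE (automated, read-only investigation).
    diagnosis = agent(f"Diagnose run {run['runId']} of flow {run['flowName']}. Diagnosis only.")

    # 2. REVIEW (human gate 1).
    outcome, comment = approve(diagnosis, ["Request fix proposal", "Dismiss"])
    if outcome != "Request fix proposal":
        teams(f"Dismissed. Diagnosis: {diagnosis}")
        return "dismissed"

    # 3. PROPOSE FIX (automated), with the reviewer's comment passed back in.
    proposal = agent(f"Diagnosis: {diagnosis}\nReviewer comment: {comment}\nPropose a fix plan.")
    outcome, _ = approve(proposal, ["Approve - execute fix", "Reject - do nothing"])
    if outcome != "Approve - execute fix":
        teams(f"Fix rejected. Proposal: {proposal}")
        return "rejected"

    # 4. EXECUTE: the only write step, reachable only after both approvals.
    result = agent(f"Apply this approved fix and resubmit run {run['runId']}:\n{proposal}")
    teams(f"Fix applied. Result: {result}")
    return "executed"
```

Both gates compare against the approving option rather than the rejecting one, so any unexpected outcome stops before the write step.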

Three connectors total: Microsoft Copilot Studio, Approvals, and Teams.

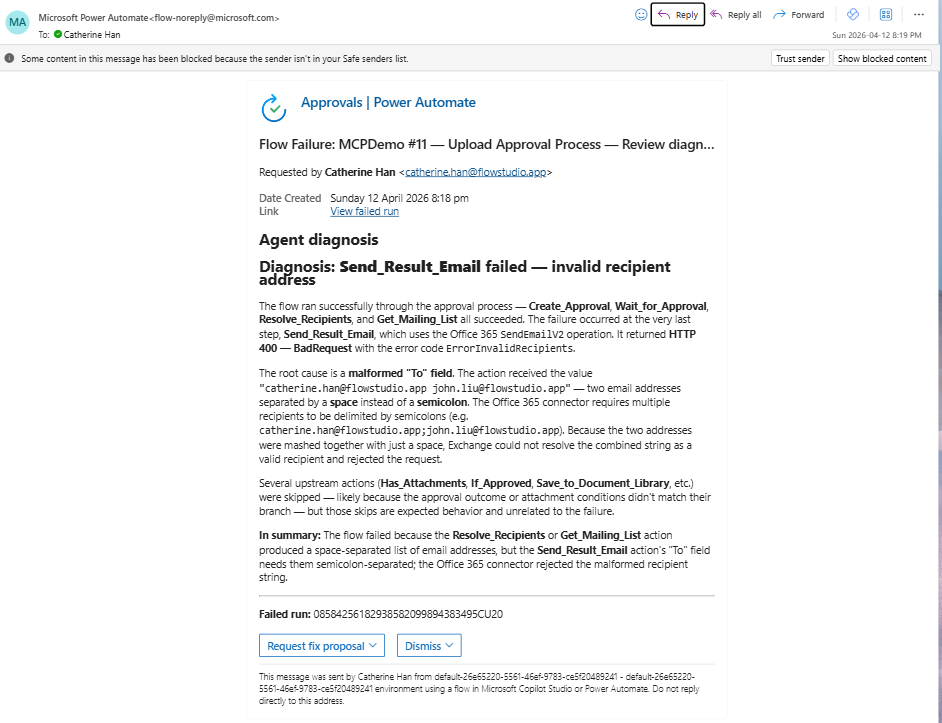

What the diagnosis looks like

The full agent diagnosis in the Power Automate approval portal. The agent traced the failure from Send_Result_Email back through the entire action chain, identified every action that succeeded, and pinpointed the root cause: a malformed "To" field with spaces instead of semicolons. Complete plain-English explanation with no raw JSON.

The value here is not that the agent found an error. It is that the diagnosis is readable enough for the flow owner to approve or reject without opening raw run JSON.

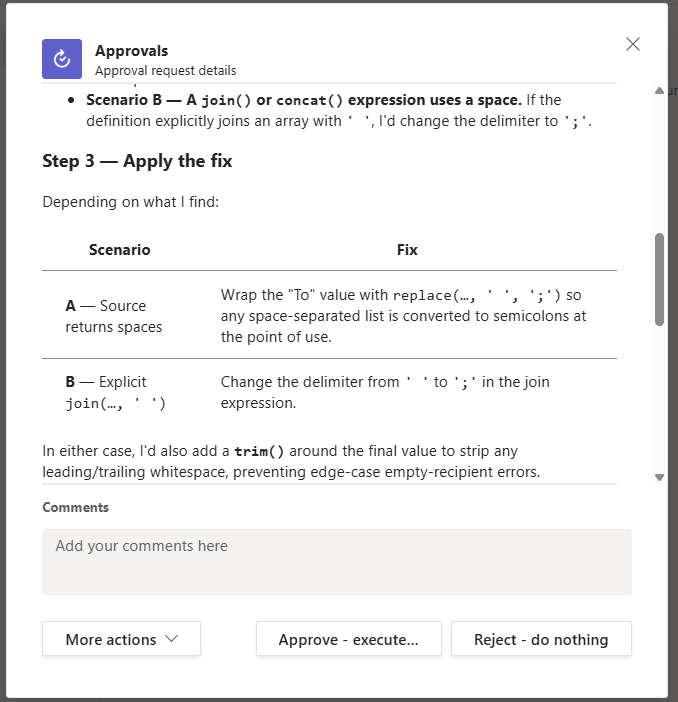

What the fix proposal looks like

The agent's fix proposal: Step 3 "Apply the fix" with two concrete scenarios (replace spaces with semicolons at point of use, or change the join delimiter upstream), plus a trim() recommendation for edge cases. "Approve - execute fix" and "Reject - do nothing" buttons at the bottom.

This is the key difference between a chat demo and a safe production workflow: the agent is not applying a fix blindly. It is presenting a constrained proposal for human approval.
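The two scenarios in the fix proposal are easy to sanity-check outside the flow. This Python sketch mirrors them under an assumption the post does not spell out: the recipients arrive either as one space-separated string or as a list of addresses. fix_at_point_of_use corresponds to replacing spaces with semicolons where the value is used; join_recipients corresponds to changing the join delimiter upstream. Both function names are hypothetical, and the whitespace handling plays the role of the trim() recommendation.

```python
from typing import Iterable


def fix_at_point_of_use(to_field: str) -> str:
    """Scenario 1: turn a space-separated "To" value into a semicolon-separated one.

    str.split() with no argument splits on any run of whitespace and ignores
    leading/trailing whitespace, so doubled spaces do not create empty entries
    (a plain character-for-character replace would).
    """
    return ";".join(to_field.split())


def join_recipients(addresses: Iterable[str]) -> str:
    """Scenario 2: build the value upstream with ';' as the join delimiter.

    Each address is trimmed first and blank entries are skipped.
    """
    return ";".join(a.strip() for a in addresses if a.strip())


if __name__ == "__main__":
    broken = " alice@contoso.com  bob@contoso.com "
    print(fix_at_point_of_use(broken))  # alice@contoso.com;bob@contoso.com
    print(join_recipients(["alice@contoso.com ", " bob@contoso.com", ""]))  # alice@contoso.com;bob@contoso.com
```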

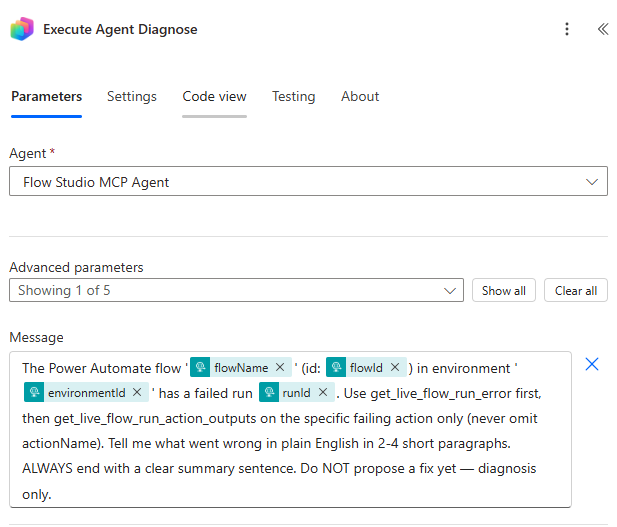

The Execute Agent Diagnose action inside Power Automate. The Message field contains the full prompt with dynamic content tokens (flowName, flowId, environmentId, runId) and all four prompt constraints visible: enforce actionName, require plain English, force a summary sentence, and restrict to diagnosis only.

Four prompt constraints that matter

1. Enforce the actionName parameter

The agent sometimes calls get_live_flow_run_action_outputs without specifying which action to inspect. When that happens, the tool returns the entire run's output data, which is too large to process. The prompt includes: "Use get_live_flow_run_action_outputs on the specific failing action only (never omit actionName)."

2. Force a summary even on large tool returns

When tool calls return large JSON, the agent sometimes passes the raw output as its "response" without summarizing. The prompt includes: "ALWAYS end with a clear summary sentence. Even if your tool calls return large data, you MUST produce a final text response summarizing the root cause."

3. Separate diagnosis from fix proposal

If you ask the agent to "diagnose and fix" in one prompt, it skips the diagnosis and jumps to a fix. The solution is architectural: split into two separate ExecuteCopilotAsyncV2 calls. Stage 1 gets "diagnosis only, do NOT propose a fix yet." Stage 3 receives the diagnosis as context and proposes the fix. The separation forces complete investigation before solutioning.
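Put together, a Stage 1 message might be assembled as below. This is an illustrative Python sketch: the constraint sentences for actionName, the summary, and diagnosis-only are quoted from this section, while the context sentence, the plain-English wording, and the function name build_diagnosis_prompt are assumptions. In the real flow the four values are dynamic content tokens from the HTTP trigger.

```python
# Constraint sentences quoted in the post.
ENFORCE_ACTION_NAME = (
    "Use get_live_flow_run_action_outputs on the specific failing action only "
    "(never omit actionName)."
)
FORCE_SUMMARY = (
    "ALWAYS end with a clear summary sentence. Even if your tool calls return large "
    "data, you MUST produce a final text response summarizing the root cause."
)
DIAGNOSIS_ONLY = "diagnosis only, do NOT propose a fix yet."

# Assumed wording for the plain-English constraint (not quoted in the post).
PLAIN_ENGLISH = "Explain the failure in plain English. Do not return raw JSON."


def build_diagnosis_prompt(flow_name: str, flow_id: str, environment_id: str, run_id: str) -> str:
    """Assemble the Stage 1 message sent through Execute Agent and wait."""
    # Assumed context sentence identifying the failed run.
    context = (
        f"Flow '{flow_name}' (flowId {flow_id}) in environment {environment_id} "
        f"failed in run {run_id}. Use get_live_flow_run_error to find the failing action."
    )
    return "\n".join([context, ENFORCE_ACTION_NAME, PLAIN_ENGLISH, FORCE_SUMMARY, DIAGNOSIS_ONLY])


if __name__ == "__main__":
    print(build_diagnosis_prompt("Weekly Report", "flow-id", "env-id", "run-id"))
```

Ending on the diagnosis-only line keeps the stage boundary as the last instruction the agent reads.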

4. Pipe approval comments back to the agent

The reviewer often has context the agent does not. "The SharePoint list schema changed last week." "Ignore the Teams notification error, that channel was deprecated." The Stage 3 prompt includes: "The user also added this comment: [comment]. Take the comment into account." This creates a feedback loop where human domain knowledge shapes the fix proposal.
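The Stage 3 message carries two pieces of context forward: the Stage 1 diagnosis and the reviewer's approval comment. A hypothetical Python sketch; only the comment sentence is quoted from this post, and the rest of the wording and the name build_fix_prompt are assumptions. One choice in this sketch that the post does not specify: the comment line is omitted when the reviewer left the field blank, so the agent is never told to take an empty comment into account.

```python
def build_fix_prompt(diagnosis: str, reviewer_comment: str = "") -> str:
    """Assemble the Stage 3 message: prior diagnosis plus optional reviewer context."""
    parts = [
        # Assumed framing; the diagnosis is the Stage 1 agent response.
        f"Here is the diagnosis of a failed flow run:\n{diagnosis}",
        "Propose a fix plan. Do not apply it.",
    ]
    # Trim whitespace and a trailing period so the quoted sentence reads cleanly.
    comment = reviewer_comment.strip().rstrip(".")
    if comment:
        # Sentence quoted from the post, with the approval comment substituted in.
        parts.append(f"The user also added this comment: {comment}. Take the comment into account.")
    return "\n\n".join(parts)


if __name__ == "__main__":
    print(build_fix_prompt("The To field uses spaces.", "The SharePoint list schema changed last week."))
```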

The security model

This flow gives the agent full read AND write access (including update_live_flow). That is appropriate for an IT-operated pipeline where the human reviewer is the flow maker themselves.

For a user-facing deployment where business users query the agent directly ("why didn't my flow run?"), you would restrict the agent to read-only tools: untick update_live_flow, set_live_flow_state, and any other write operations. The agent can still diagnose but cannot modify anything.
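If you manage tool access in code rather than by unticking boxes (for example, when connecting Flow Studio MCP to a different MCP client), the same rule can be written as an allowlist. A minimal Python sketch, assuming only the tool names mentioned in this post; it does not reproduce the full Flow Studio MCP tool list, and the function name tools_for_deployment is hypothetical. resubmit_live_flow_run stays off the allowlist because it starts a new run.

```python
from typing import Iterable, List

# Tools this sketch treats as read-only (names taken from the post).
READ_ONLY_TOOLS = frozenset({
    "get_live_flow_run_error",
    "get_live_flow_run_action_outputs",
})


def tools_for_deployment(available: Iterable[str], user_facing: bool) -> List[str]:
    """Return the sorted list of tools to enable for a deployment.

    IT-operated pipeline: everything available (writes stay behind approval gates).
    User-facing agent: only allowlisted read-only tools, so any unknown or
    newly added tool is excluded by default.
    """
    tools = sorted(set(available))
    if not user_facing:
        return tools
    return [t for t in tools if t in READ_ONLY_TOOLS]
```

An allowlist fails closed: a write tool added to the server later is not exposed to business users until someone deliberately adds it.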

Try it

One more thing: this flow was built by an AI agent using Flow Studio MCP. If you share this blog post with your agent, it can build the same flow for you.

If you want to test this pattern, you do not need to build the whole thing first. Start with diagnosis only.

- Create a Copilot Studio agent.

- Add Flow Studio MCP as a tool to the agent. Setup guide: learn.flowstudio.app/mcp-getting-started (Copilot Studio section).

- Call the agent from a flow.

- Return the diagnosis.

- Only then add the execute step.

The Copilot Studio connector is the piece most people do not know exists. It lets any Power Automate flow call any published Copilot Studio agent as an action.

Frequently asked questions

Can a Power Automate flow call a Copilot Studio agent?

Yes. Use the Microsoft Copilot Studio connector's ExecuteCopilotAsyncV2 action. It lets any Power Automate flow call any published Copilot Studio agent, passing a message and receiving the agent's response as text. This is the connector most Power Automate makers do not know exists.

Can a Copilot Studio agent fix a Power Automate flow?

Yes, if the agent has access to the right MCP tools. With Flow Studio MCP configured as a tool, the agent can call update_live_flow to modify a flow definition and resubmit_live_flow_run to verify the fix. The architecture in this post adds two human approval gates so the agent only executes fixes after explicit human sign-off.

What is ExecuteCopilotAsyncV2?

ExecuteCopilotAsyncV2 is the Power Automate action that calls a published Copilot Studio agent. It is part of the Microsoft Copilot Studio connector. You pass a message as input and receive the agent's response. The action supports long-running agent operations (the Async in the name) and works with agents that have MCP tools, knowledge sources, or custom topics configured.

What MCP tools does the agent need for Power Automate debugging?

The two critical tools are get_live_flow_run_error (returns a per-action failure breakdown, ordered outer-to-inner for root cause diagnosis) and get_live_flow_run_action_outputs (reads inputs and outputs at any action inside a run, including loop iterations). These expose runtime data that Microsoft's standard Power Platform admin API does not surface. Both are provided by Flow Studio MCP.
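The outer-to-inner ordering is what makes root-cause selection mechanical: a scope or loop is marked failed because something inside it failed, so the innermost failed entry is the natural starting point. A Python sketch, assuming a simplified list of name/status/error records; this shape (ActionFailure, root_cause) is hypothetical and is not the actual get_live_flow_run_error output format.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class ActionFailure:
    # Hypothetical record shape; the real tool output format may differ.
    name: str
    status: str
    error: str = ""


def root_cause(breakdown: List[ActionFailure]) -> Optional[ActionFailure]:
    """Pick the innermost failed action from an outer-to-inner breakdown.

    Returns None if nothing in the breakdown failed.
    """
    failed = [a for a in breakdown if a.status == "Failed"]
    return failed[-1] if failed else None
```

For a chain like Scope, then Apply to each, then Send_Result_Email, all marked failed, this returns the Send_Result_Email entry, which is where the agent should call get_live_flow_run_action_outputs with an explicit actionName.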

Is it safe to let an AI agent modify Power Automate flows?

It depends on the deployment model. For an IT-operated pipeline where the human reviewer is the flow maker, giving the agent write access (including update_live_flow) behind two approval gates is a reasonable pattern. For a user-facing deployment where business users query the agent directly, restrict the agent to read-only tools so it can diagnose but not modify. The approval-gated architecture in this post is designed for the first scenario.

About Flow Studio MCP: Flow Studio MCP is a Model Context Protocol server that gives AI agents action-level access to Power Automate. Listed on GitHub's awesome-copilot. Works with Microsoft Copilot Studio, GitHub Copilot, Claude, and any MCP-compatible agent.

Related reading:

- How to Plug a Custom MCP Server Into Microsoft Copilot Studio in 5 Minutes

- I Told a Reddit User to Stop Learning Power Automate. Here's Why.

- Debugging Power Automate Flows with an AI Agent

Catherine Han, Flow Studio